AI GOVERNANCE AND ETHICS FOR BUSINESS

A practical framework for leadership teams building AI policies that enable innovation

Good governance doesn't slow AI adoption down. It gives your people the confidence to move faster. Here's how to build it.

Book a 15-Minute Call

Last updated: March 2026

Who this guide is for

If you're a board director asking what AI governance your organisation needs, or a senior leader trying to write an AI policy that doesn't just block everything, or a compliance professional wondering how AI fits into your existing frameworks, this guide is for you.

We've helped dozens of organisations think through their AI governance. And the pattern we see everywhere is the same: organisations either have no policy at all or they have one that was rushed out early, driven by caution, and so restrictive it actively hinders adoption. Neither position serves the organisation well.

There's a better path. Governance that enables rather than blocks. Policies built from experience rather than anxiety. Ethics grounded in practical reality rather than abstract principle. And the organisations that get governance right tend to adopt AI faster and more confidently than those that either ignore it or over-restrict. That's what this guide covers.

Contents: what you'll find in this guide

- Chapter 1: Why most AI policies fail

- Chapter 2: The right sequence: literacy before governance

- Chapter 3: The real risks you need to understand

- Chapter 4: Building policies that enable

- Chapter 5: Data, privacy, and your obligations

- Chapter 6: Ethics in practice (not just on paper)

- Chapter 7: The environmental dimension

- Chapter 8: Where to start

- Further reading

WHY MOST AI POLICIES FAIL

74% of companies plan to deploy agentic AI within two years, but only 21% have governance ready (Deloitte). That gap should be on every board and leadership team's radar. But how you close it matters as much as closing it.

The reason most AI policies don't land is straightforward: they're written before anyone in the organisation has hands-on experience with the tools. And a policy written without practical understanding almost always ends up in the wrong place.

A policy drafted this way by legal or IT is almost always generic, restrictive, and driven by caution rather than understanding. It says what people can't do without giving meaningful guidance on what they can do. It blocks adoption without enabling anything useful. And it typically sits in a shared folder somewhere, ignored by the people it's supposed to guide. That's not governance. That's a document nobody reads.

The NZ Corrections case is a useful cautionary tale. An explicit AI policy existed on paper but was violated in practice because the training was insufficient. The policy said the right things. But the people using the tools didn't understand those things well enough to follow them. Policy without literacy is policy without teeth. And the flip side is also true: when people understand the "why" behind the guidelines, compliance happens naturally rather than being enforced.

This is why AI governance is Building Block 3 in the Five Strategic Building Blocks framework we use at Ten Past Tomorrow. Not Block 1. Not the starting point. Block 3. Because you need literacy (Block 1) and cultural foundations (Block 2) in place before governance becomes meaningful. That sequencing is one of the most important things we've learned working with organisations on AI adoption.

THE RIGHT SEQUENCE: LITERACY BEFORE GOVERNANCE

We know this feels counterintuitive, especially if you're in a compliance or governance role. The instinct is to establish rules before people start using the tools. But the data and the experience both point in the other direction. And the organisations that follow this sequence tend to end up with better policies and higher adoption.

Policies written by people who don't use AI tools are almost always off-target. They focus on the wrong risks, over-restrict in areas where the risk is low, or miss genuine concerns entirely. You can't write a good driving manual if you've never been behind the wheel, and the rules that result frustrate the people who are trying to use AI productively.

Here's the sequence that works:

First, build AI literacy across the organisation. Get your leadership team using the tools themselves. Get your operational teams trained on real workflows. Give people enough experience to understand what AI actually does, how it works, where it's strong, and where it falls short. This is Building Block 1: AI Literacy and Skills Training. And it doesn't need to take months. Even a few weeks of structured, hands-on experience transforms the quality of the governance conversation.

Second, build the cultural conditions. Make AI part of how the organisation works, not a side project. AI councils, leadership modelling, embedding AI into regular rhythms and meetings. This creates the environment where governance conversations happen between informed people rather than anxious ones. This is Building Block 2: Cultural Change. When AI is part of the culture, governance becomes a natural conversation rather than a top-down imposition.

Third, now write the policies. And write them collaboratively, with people who have hands-on experience with the tools. Include frontline users, not just legal and IT. The policies will be better because they're grounded in practical reality, and they'll be followed because the people who helped write them actually understand what they mean. Collaborative policy-building also surfaces edge cases and practical challenges that a small drafting team would never think of.

This doesn't mean you operate without any guidelines during the literacy phase. Basic data handling principles and confidentiality rules should apply from day one. But a comprehensive AI usage policy? That should wait until your people know what they're governing.

THE REAL RISKS YOU NEED TO UNDERSTAND

There's a video walking through the real risks and concerns of AI that covers this in depth. And one of the most useful things we can do in this section is separate the risks that are genuine from the concerns that are overstated. Getting this distinction right helps you focus your governance where it actually matters.

Data security and privacy. This is real and it deserves serious attention. When employees use AI tools, they're often entering business data into third-party systems: client information, financial data, strategic plans, proprietary processes. AI and your data explores the tension between innovation and risk here. Microsoft and LinkedIn found that 40% of employees use AI tools their organisations haven't approved. That's 40% of your workforce potentially uploading proprietary information to systems you don't control. The good news is that enterprise-grade AI tools now offer strong data isolation. The governance challenge is making sure your teams are using the right versions.

Bias and discrimination. AI models reflect the data they were trained on, and that data contains human biases. AI in recruitment is a particularly sensitive area. If you're using AI to screen CVs, assess candidates, or make hiring recommendations, you need to understand that the outputs may encode historical biases around gender, race, age, and background. The AI doesn't know it's being biased. The human overseeing it needs to. But this is also an area where AI can help: when organisations actively monitor for bias in their AI-assisted processes, they often discover and correct biases that existed in their human processes too.

Misinformation and hallucinations. AI generates text that sounds confident whether it's correct or not. It can fabricate citations, invent statistics, and present fiction as fact. For any use case where accuracy matters (legal documents, financial reports, medical information, public communications), human verification is essential. The key is proportional oversight. Not every AI output needs the same level of checking, but high-stakes outputs need rigorous review.

Intellectual property and copyright. AI-generated content raises real copyright questions that are still being worked out in courts globally. Who owns the output? What happens when AI reproduces copyrighted material? What are your obligations when using AI to create content that will be published? The legal landscape is evolving, and organisations need to stay informed. Building a relationship with legal counsel who understands AI is increasingly important.

Over-reliance and skill degradation. If people outsource their thinking to AI entirely, critical skills can atrophy over time. This is less a policy issue and more a cultural one, but it belongs in any governance conversation. The goal is AI augmentation, not AI dependence. And the solution is straightforward: encourage your teams to use AI as a thinking partner rather than a replacement for thinking.

Loss of competitive advantage. If your proprietary knowledge, processes, and customer insights are being fed into public AI tools, you may be giving away more than you realise. Understanding which AI tools are secure (enterprise versions with data isolation) versus which are not (free consumer versions) is a governance essential. A simple approved-tools list goes a long way here.

Need help building governance that works for your organisation?

Book a 15-minute call →

BUILDING POLICIES THAT ENABLE

Good AI policies answer the questions employees actually ask. Not just "what am I not allowed to do?" but "how can I use AI in my work, and what do I need to be careful about?" When your policy helps people do their jobs better, adoption and compliance both go up.

Key principles for policies that work:

Start with what's enabled, not what's restricted. Frame the policy as a guide to using AI well, not a list of prohibitions. "You can use AI for research, drafting, analysis, and brainstorming, provided you verify outputs and follow these data handling guidelines" is far more useful than a long list of don'ts. Leading with enablement changes the entire tone of the conversation and signals that the organisation sees AI as an opportunity.

Be specific about data categories. Not all data carries the same risk. Public information is fine to use in any AI tool. Internal documents may require enterprise-grade tools with data isolation. Client data and personal information should have clear rules about what can and can't be entered into AI systems. A simple data classification framework makes this practical rather than theoretical.

Require human oversight, proportional to risk. A first draft of an internal email needs less oversight than a legal document or financial report. Build proportionality into the policy rather than demanding the same level of review for everything. This keeps the process practical and avoids the "just ignore the policy because it's too burdensome" dynamic that kills so many governance frameworks.

Make it iterative. AI tools and capabilities change quarterly. Your policy should be reviewed and updated at least as often. Build in a scheduled review process rather than treating the policy as a one-off document. The best governance frameworks we've seen include a quarterly review cadence and a clear owner responsible for keeping the policy current.

Build it collaboratively. Include people from across the organisation. Frontline users know what the real challenges are. Legal knows the compliance requirements. IT knows the technical constraints. A policy written by any one of those groups alone will be incomplete. The process of building the policy together is almost as valuable as the policy itself, because it creates shared understanding.
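For teams that want to make the proportionality and review-cadence principles above concrete, here is a minimal sketch in Python. It is an illustration, not a prescribed implementation: the `Risk` tiers, the wording in `REVIEW_RULES`, the "client-facing summary" example, and the 92-day threshold standing in for "quarterly" are all assumptions that each organisation would replace with its own.

```python
from datetime import date
from enum import Enum


class Risk(Enum):
    LOW = "low"        # e.g. first draft of an internal email
    MEDIUM = "medium"  # e.g. a client-facing summary (assumed example)
    HIGH = "high"      # e.g. a legal document or financial report


# Hypothetical review rules: each organisation would define its own.
REVIEW_RULES = {
    Risk.LOW: "author self-check",
    Risk.MEDIUM: "peer review before sending",
    Risk.HIGH: "expert sign-off and source verification",
}


def required_review(risk: Risk) -> str:
    """Return the level of human review expected for a given risk tier."""
    return REVIEW_RULES[risk]


def policy_review_overdue(last_reviewed: date, today: date,
                          max_days: int = 92) -> bool:
    """True if more than max_days have passed since the last policy review.

    92 days is an assumed stand-in for a quarterly cadence.
    """
    return (today - last_reviewed).days > max_days
```

A lookup table like this keeps the oversight rules visible in one place, so the policy's named owner can adjust a tier without rewriting the rest of the policy.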

The ethical implications of AI aren't just about rules. They're about creating a culture where people think critically about their AI use, raise concerns when something doesn't feel right, and share learnings with colleagues. Policy creates the framework. Culture makes it work.

DATA, PRIVACY, AND YOUR OBLIGATIONS

Data privacy has been a governance concern since AI went mainstream. The US House memo on ChatGPT was an early signal. But the concerns have evolved as AI tools have become more integrated into business operations, and the good news is that the tools for managing data risk have evolved too.

For New Zealand organisations, the Privacy Act 2020 applies to AI use just as it applies to any other data processing. Personal information entered into AI systems is still covered by your privacy obligations. The fact that the processing happens via an AI tool doesn't change your responsibilities. But it doesn't require a whole new compliance framework either. In most cases, your existing privacy practices extend naturally to AI use with a few targeted additions.

Practical guidance for data governance:

Know what your tools do with data. Enterprise versions of AI tools (ChatGPT Enterprise, Google Workspace with Gemini, Microsoft 365 Copilot) typically include data isolation guarantees: your inputs aren't used to train the model, and your data stays within your tenant. Free and consumer versions usually don't offer these guarantees. Understand the difference and set clear rules about which versions are approved. This single distinction eliminates the majority of data security concerns.

Classify your data. Not every piece of information carries the same sensitivity. Create a simple classification system: public, internal, confidential, restricted. Map each category to the tools and practices that are appropriate for it. Keep it simple enough that people can apply it without second-guessing. Three or four categories is enough.

Audit regularly. Run periodic "AI reveal" sessions. Ask your teams to list every AI tool they've used in the past month. This isn't about catching people out. It's about understanding the real landscape of AI use in your organisation, including the shadow AI that's happening whether you know about it or not. These sessions almost always surface useful information and often lead to better tool choices and workflow improvements.
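The classification and audit steps above can be sketched in a few lines of Python. This is a hedged illustration only: the four levels come from the text, but the tool tiers, the ceilings in `TOOL_CEILING`, and the function names are hypothetical, and in this sketch "restricted" data is not permitted in any AI tool.

```python
from collections import Counter

# The four categories named above, ordered from least to most sensitive.
LEVELS = ["public", "internal", "confidential", "restricted"]

# Hypothetical mapping: the most sensitive level each tool tier may receive.
TOOL_CEILING = {
    "consumer": "public",          # free or consumer versions
    "enterprise": "confidential",  # enterprise versions with data isolation
}


def is_allowed(tool_tier: str, data_level: str) -> bool:
    """True if data at data_level may be entered into a tool of tool_tier."""
    return LEVELS.index(data_level) <= LEVELS.index(TOOL_CEILING[tool_tier])


def shadow_tools(reported: list[str], approved: set[str]) -> dict[str, int]:
    """Count tools reported in an 'AI reveal' session that are not approved.

    Names are compared case-insensitively; `approved` must be lowercase.
    """
    counts = Counter(name.strip().lower() for name in reported)
    return {tool: n for tool, n in counts.items() if tool not in approved}
```

Running `shadow_tools` over the answers from a reveal session gives a simple count of unapproved tools to discuss, which fits the aim of understanding real usage rather than catching people out.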

AI and your data explores this in more depth, including practical steps for balancing innovation with protection.

ETHICS IN PRACTICE

We published an open letter about responsible and ethical AI because we believe that how we use AI says something about who we are as professionals and as organisations. But ethics can't be just a pledge. They have to show up in daily practice. And when they do, they become a source of trust and competitive advantage rather than a compliance burden.

The Meta AI policy leak was a watershed moment. The internal documents revealed a level of cynicism about AI safety that was deeply concerning. It reinforced something we already believed: you cannot outsource ethics to the AI providers. They have their own incentives, and those incentives don't always align with your values. Which means the responsibility sits with you. And that's actually a good thing, because it means you can set standards that match your organisation's values.

Practical ethics for AI in business means:

Transparency about AI use. If AI has contributed to a document, a recommendation, or a decision, say so. This doesn't mean labelling every AI-assisted email. It means being honest with clients, stakeholders, and colleagues about when and how AI is being used in consequential work. Transparency builds trust. And in a market where AI use is increasing everywhere, being upfront about it is increasingly seen as a mark of professionalism rather than a weakness.

Human accountability. AI doesn't make decisions. People make decisions, sometimes informed by AI. The human is always accountable for the outcome. This needs to be explicit in how you think about AI governance. If an AI-assisted report contains an error, the person who published it is responsible, not the AI. Clear accountability actually makes people more thoughtful about how they use the tools, which leads to better outcomes.

Fairness and bias monitoring. Actively check for bias in AI outputs, particularly in high-stakes decisions around hiring, performance reviews, lending, and customer treatment. AI can introduce bias that's harder to spot than human bias because it's embedded in statistical patterns rather than conscious prejudice. But the advantage of AI-assisted processes is that they're auditable in ways that purely human processes often aren't. You can review the outputs systematically and identify patterns.

Proportionality of use. Not every task needs AI. And not every AI application is appropriate. Using AI to help draft a report is fine. Using AI to make automated decisions about someone's employment or benefits without human review is something that requires much more care. The level of human oversight should match the level of impact. Getting this calibration right is one of the most important governance decisions you'll make.

Practical AI governance insights, delivered weekly

Sign up to our newsletter →

THE ENVIRONMENTAL DIMENSION

This is a governance dimension that deserves more attention than it typically gets.

The hidden environmental costs of AI are significant. Training large AI models requires enormous computing power, which means enormous energy consumption. Running AI queries consumes more energy per query than a standard web search. And the data centres that power AI tools require cooling systems that consume water at scale. These are real costs that responsible organisations should be aware of.

For organisations that take sustainability seriously, AI governance should include environmental considerations. That might mean:

- Choosing AI providers who use renewable energy for their data centres

- Being thoughtful about high-volume AI use (do you need to run that query, or are you prompting casually?)

- Including AI-related emissions in sustainability reporting

- Factoring environmental impact into AI tool selection alongside capability and cost

This doesn't mean avoiding AI. The productivity gains from AI can themselves reduce environmental impact in other areas: fewer flights for research trips, less paper, more efficient resource allocation. But responsible governance means being honest about the full picture, not just the benefits. And as the AI industry matures, the providers that invest in energy efficiency and renewable power will increasingly stand out. Your choice of provider is itself an environmental decision.

WHERE TO START

If you're a board director: ask your management team three questions. What AI tools are employees using today? What data is being entered into those tools? And what governance framework is in place? If the answers are unclear, that's your signal to act. But act by building understanding first, not by rushing to restrict.

If you're a senior leader: start with literacy. Get your own hands on the tools. Bring your leadership team through a structured programme. Then, with that shared understanding as a foundation, build your governance framework collaboratively with people across the organisation. The investment in literacy first pays back in governance that actually works rather than governance that gathers dust.

If you're a compliance professional: resist the urge to lock things down before you understand what you're governing. Shadow AI is already happening. Overly restrictive policies drive it further underground, which is worse for compliance than having a permissive-but-clear framework. Instead, create a framework that brings AI use into the light, with clear guidelines that people can actually follow because they understand the reasoning behind them.

For all audiences: governance is an ongoing practice, not a one-time document. Build in regular reviews. Stay informed about how the technology and the regulatory landscape are evolving. And keep the conversation going. The organisations that handle AI governance well are the ones where it's a living topic, not a dusty policy document. When governance is done well, it becomes an enabler of confident AI adoption rather than a barrier to it.

FURTHER READING FROM ACROSS THE SITE

Risk and Governance

- The real risks and concerns of AI - A comprehensive video walkthrough of genuine AI risks

- Meta's AI policy leak - Why you can't outsource ethics to AI providers

- AI and your data - Balancing innovation and data security

- Data privacy: US House ChatGPT memo - Early privacy governance signals

- GPT-Image-2 and the evidence problem - What it means for finance, HR and insurance teams

Ethics and Responsibility

- Ethical AI pledge - Mark's commitment to responsible AI

- Ethical implications of AI (Kavita Ganesan) - Expert perspectives on AI ethics

- AI in recruitment - Bias risks in AI-assisted hiring

- AI content and copyright - IP and copyright questions

Environmental and Financial Impact

- Hidden environmental costs of AI - Energy, water, and sustainability

- AI for accounting and finance - Financial sector AI implications

- Understanding accounting data with AI - Practical finance applications

- BloombergGPT - Domain-specific AI in finance

ABOUT THE AUTHOR

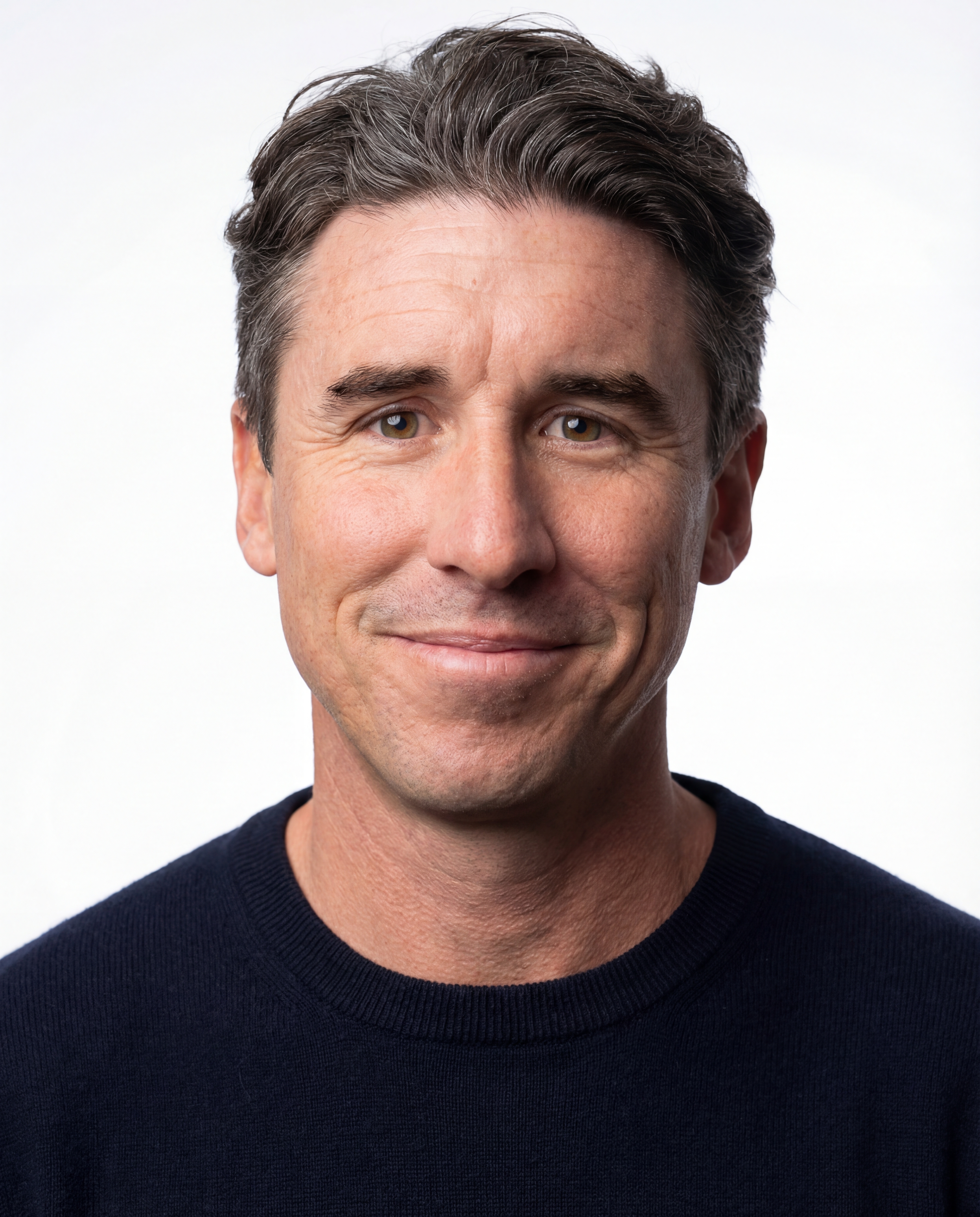

Mark Laurence

Mark is the founder of Ten Past Tomorrow, an AI consultancy and education business based in New Zealand. A trained futurist (Institute for the Future) and practical AI specialist, he works with senior leadership teams to move organisations from AI curiosity to AI capability.

He has worked with 100+ NZ organisations and leads Rapid AI Traction, a four-week programme for senior leadership teams, and The Path to AI Emergence, a ten-month transformation programme.

EXPLORE MORE GUIDES

AI for Senior Leaders

Everything NZ senior leadership teams need to know about AI strategy, adoption, and organisational readiness.

How to Implement AI in Your Organisation

The practical pathway from curiosity to capability. The Five Strategic Building Blocks that actually work.

AI and the Future of Work

What AI actually means for jobs, skills, and the way we work. A balanced, evidence-based look.