AI FOR SENIOR LEADERS

The complete guide to leading your organisation through AI adoption

The organisations making real progress with AI have one thing in common: their senior leadership teams took the time to understand it together. That's the opportunity in front of you right now.

Book a 15-Minute Call

Last updated: March 2026

Who this guide is for

If you're a CEO, founder, or senior leader in a New Zealand organisation and you know AI matters but you're not sure where to start, or you've started but things have stalled, this guide is for you. It's also for board directors who need governance-level clarity on what AI actually means for the organisations they oversee.

We've spent the last three years working with senior leadership teams across New Zealand, helping them move from AI curiosity to AI capability. And the single biggest pattern we see is this: the organisations that treat AI as a leadership and culture opportunity, rather than a technology rollout, are the ones making real progress. The technology is ready. The question is whether leadership teams are ready to lead through it.

So this guide pulls together everything we've learned, everything the research confirms, and everything we think senior leaders need to understand right now. Not the hype. Not the doom. The practical reality of what AI means for how you lead, how your teams work, and where the real opportunities sit for your organisation.

Contents: what you'll find in this guide

- Chapter 1: Why AI is a leadership challenge (and what that means for you)

- Chapter 2: The widening gap between AI-ready and AI-behind organisations

- Chapter 3: What AI-literate leadership actually looks like

- Chapter 4: The ROI question (and why it's the wrong question to start with)

- Chapter 5: How cultural change determines adoption success

- Chapter 6: What the research actually says

- Chapter 7: Where to start

- Further reading from across the site

THE LEADERSHIP CHALLENGE

There's a common approach to AI adoption that goes something like this: buy the tools, train a few people, write a policy, move on. And then six months later, nothing has really changed. The tools are there but they're gathering dust. A few enthusiasts are using them. The organisation as a whole hasn't shifted.

We've been banging this drum for a while now, and the evidence keeps stacking up: AI is fundamentally a leadership and company culture challenge. The biggest barrier to AI adoption in any organisation is whether the senior leadership team understands AI well enough to make informed decisions about it. Not the technology. Not the cost. Not even employee resistance. It's the understanding and engagement of the people at the top.

And that's actually good news, because it means the lever is in your hands. You don't need to wait for better tools or bigger budgets. You need your leadership team to get hands-on with AI and build a shared understanding of what it means for your organisation.

We wrote about this in detail when looking at why AI adoption stalls in organisations. The Toyota parallel is instructive. In the 1980s, American car manufacturers sent teams to study Toyota's lean manufacturing. They came back with the processes and the frameworks. And they still couldn't replicate the results. Why? Because lean manufacturing wasn't a process. It was a culture. AI is the same. The organisations getting results aren't just deploying tools. They're building a culture where AI is woven into how people think about their work.

You can't lead what you don't understand. And when leadership teams take the time to understand AI together, they create the conditions for everyone else to follow. BCG's research confirms this: employee-centric organisations are seven times more likely to reach AI maturity. But that starts with the senior leadership team engaging deeply enough to set the right conditions.

THE WIDENING GAP

There's a pattern emerging that every leadership team in New Zealand should be paying attention to. The gap between organisations that are making real progress with AI and those that haven't started is getting wider. And it's accelerating.

The organisations pulling ahead aren't doing it because they have better tools or bigger budgets. They're doing it because they've redesigned how the organisation operates. They've embedded AI into workflows, decision-making, and daily routines rather than treating it as a set of tools that individuals might use.

A 2024 Wharton study surveyed over 800 senior leaders and found that 72% now use AI weekly. And the business outcomes are striking. BCG's research shows AI leaders seeing 1.7 times revenue growth, 3.6 times total shareholder return, and 1.6 times EBIT margin improvement over their peers. These aren't marginal gains. And the gap is widening because the learning compounds. Every month an organisation spends integrating AI into real work, it builds capability that competitors who haven't started can't shortcut.

So if you're thinking "we'll get to it eventually," we'd gently push back. Not because you're behind in some catastrophic way, but because the opportunity cost of waiting is real and growing. The organisations that started six months ago aren't just six months ahead. They've compounded six months of learning, workflow redesign, and cultural adaptation. That's hard to catch up to.

The argument that AI might be a bubble misses this point entirely. Even if the hype cycle corrects (and it will, as all hype cycles do), the underlying capability is already here and already changing how work gets done. The organisations building AI capability now will carry that advantage regardless of what happens with valuations or the news cycle. The opportunity is real, and it rewards early action.

WHAT AI-LITERATE LEADERSHIP ACTUALLY LOOKS LIKE

Let's be clear about what we mean here, because "AI literacy" can sound like everyone needs to learn to code. That's not it. We're talking about something more fundamental: the kind of understanding that lets a leadership team make good strategic decisions about AI, set the right expectations, and model the behaviour they want to see across the organisation.

An AI-literate senior leader understands three things simultaneously:

The strategic implications. How AI fits into your business model, your competitive position, your industry dynamics. What it means for your value proposition. Where the risks are and where the opportunities sit. This is the strategic altitude that only your senior team can fly at, and it's where AI understanding has the highest leverage. When you can see how AI reshapes your industry, you make better decisions about everything else.

The practical reality. What the tools actually do. Not from a demo or a vendor pitch, but from using them yourself, with your own work, in your own context. A Stanford study shows why this matters: when doctors used AI diagnostic tools, their performance actually got worse. Not because the AI was bad, but because the doctors didn't understand it well enough to use it properly. They either over-relied on it or ignored its output entirely. The same dynamic plays out in every industry. Leaders who've used the tools can tell the difference between a genuine capability and a vendor pitch. Leaders who haven't are flying blind.

The human dimension. How your people feel about AI. The excitement, the curiosity, the scepticism, the anxiety about job security. All of those reactions are valid, and they all need to be acknowledged. There's a piece on introducing AI to teams without creating panic, and the core insight still holds: empathy and transparency beat mandates every time. When leaders show they've engaged with AI themselves and can talk openly about both the possibilities and the uncertainties, it creates psychological safety for everyone else to engage too.

When we look at the organisations making real progress, the common thread isn't budget or industry. It's that the senior leadership team personally understands AI well enough to make good decisions about it. They can set realistic expectations. They can model the behaviour they want to see. And they can have informed conversations with their teams about what AI means for the work ahead.

Shopify is the case study we keep coming back to. When Tobi Lütke published his CEO memo making AI usage part of performance reviews and company culture, it sent a signal that reverberated through the entire organisation. Non-engineers became the fastest-growing AI user group. That's leadership modelling in action. And it didn't require technical expertise. It required a leader who'd used the tools himself, understood the potential, and was willing to set a clear direction.

Want to develop these capabilities in your leadership team?

Book a 15-minute call →

THE ROI QUESTION

We get this question in almost every conversation with a leadership team: "What's the ROI?"

And we get why it's asked. You're responsible for deploying capital wisely. You need to justify investments to boards. You need to show results. So the instinct to reach for an ROI calculation makes total sense.

But here's our honest take: ROI is the wrong first question.

Not because ROI doesn't matter. Of course it does. But because starting with ROI creates a framing that works against you. It positions AI as a cost to justify rather than a capability to develop. And that framing leads organisations down a predictable path: run small pilots, measure them against unrealistic expectations, conclude it didn't work, and shelve the whole thing. We've seen this pattern play out multiple times, and it almost always comes down to the framing, not the technology.

The New York Times ran a piece saying AI wasn't boosting productivity. And they were right about the data but wrong about the conclusion. The organisations seeing real productivity gains are the ones that have redesigned work around what AI makes possible. They've rethought workflows, reallocated time, and changed how teams collaborate. The organisations that bolted AI onto existing processes and expected magic? They're the ones the New York Times was writing about.

The research backs this up. 84% of organisations haven't redesigned a single job around AI (Deloitte, 2026). 85% are stuck at task-level AI use (BCG). 95% of AI pilots fail (MIT/McKinsey). And in every case, the root cause traces back to approach rather than technology. The pilots that succeed are the ones where leaders understood AI well enough to ask the right questions, design the right experiments, and create the right conditions for change.

The right first question isn't "What's the ROI?" It's "Do we understand this well enough to make good decisions about it?" And for most leadership teams, the honest answer is not yet. That's not a criticism. It's an invitation. The understanding comes faster than you think, and once it's there, the ROI conversations become genuinely productive.

HOW CULTURAL CHANGE DETERMINES ADOPTION SUCCESS

This is the part that catches organisations by surprise.

You can buy the tools. You can write the policies. You can even run the training. But if AI doesn't become part of how the organisation thinks, meets, hires, reviews performance, and makes decisions, it stays as a side project. Individual enthusiasts use it. The organisation doesn't. And the potential sits there unrealised.

Cultural change is Building Block 2 in the framework we use at Ten Past Tomorrow, and it's the broadest of the five blocks for good reason. It covers everything from embedding AI into organisational rhythms (one-on-ones, team meetings, all-hands, job descriptions, KPIs, performance reviews) to leadership modelling, AI councils and champions, and the psychological management of how people react to a technology that feels both exciting and uncertain. It's not one initiative. It's dozens of small shifts in how the organisation operates day to day.

The grassroots AI uprising is already happening in your organisation, whether you know about it or not. Microsoft and LinkedIn found that 75% of knowledge workers are already using AI tools, often without employer approval. That's shadow AI. And while it sounds like a risk (and it can be), it's also a signal. It tells you there's demand. People want to use these tools. They're finding them helpful. The opportunity is to bring that energy into the open, give it structure and support, and let it spread safely across the organisation.

Every employee you have is now a potential AI innovator. The question is whether your culture enables that or suppresses it. The electricity analogy is useful here. When electricity first arrived, companies used it to power the same machines that steam had powered. It took decades before they redesigned their factories around what electricity actually made possible. AI is at the same inflection point. The organisations that will pull ahead are the ones that redesign their operations around AI's actual capabilities, not just bolt it onto existing workflows. And that redesign is a cultural shift as much as a technical one. It requires permission, experimentation, shared learning, and leaders who are visibly engaged with the technology themselves.

Ready to build AI capability across your senior team?

Explore Rapid AI Traction for Senior Leadership Teams →

WHAT THE RESEARCH ACTUALLY SAYS

We try to stay evidence-based in everything we write and teach, so here are the numbers that matter most for senior leaders right now:

- 84% of organisations haven't redesigned a single job around AI (Deloitte, 2026)

- 95% of AI pilots fail (MIT/McKinsey)

- 85%+ of organisations are stuck at task-level AI use (BCG)

- 7x more likely to reach AI maturity if your approach is employee-centric (BCG)

- 1.7x revenue growth for AI leaders vs. peers (BCG)

- 72% of senior leaders now use AI weekly (Wharton, 2024)

- 75% of knowledge workers using AI, often without employer approval (Microsoft/LinkedIn)

- 14% to 85% AI fluency at the San Antonio Spurs by embedding training in daily work (OpenAI)

That last one is worth pausing on. The San Antonio Spurs took their organisation from 14% AI fluency to 85% by embedding AI training into daily work rather than running it as a separate initiative. That's the kind of transformation that's possible when leadership commits and the culture supports it.

We reviewed the 2024 Wharton study in detail, along with the 2023 AI Readiness Report and KPMG's generative AI survey. The pattern across all of them is consistent: the technology works. The organisations getting results are the ones with leadership buy-in, cultural readiness, and a willingness to redesign how work actually gets done.

The argument that AI is underhyped, not overhyped, is one we've been making for two years now. And the data keeps reinforcing it. The gap between what AI can do and what organisations are actually doing with it is enormous. That gap represents a real opportunity for leadership teams willing to close it. But it does require action, not just awareness.

WHERE TO START

So what do you actually do with all this?

We've worked with enough leadership teams to know that the path from "we should do something about AI" to "we're making real progress" is rarely linear. But there are a few things that consistently move the needle, and they're more accessible than you might think.

First, get your own hands dirty. Use the tools yourself. Not in a demo. Not in a workshop. In your actual work. Write emails with AI. Summarise reports. Prepare for meetings. Challenge your own assumptions about what the tools can and can't do. AI rewards humans with a questioning mindset, and the only way to develop that mindset is through direct experience. Even 30 minutes a day for a couple of weeks will fundamentally change how you think about AI's role in your organisation.

Second, invest in your leadership team's AI literacy. Not a one-off lunch-and-learn. A structured programme that gives every member of your senior leadership team (SLT) a shared language, a shared framework, and individual proficiency with the tools. The power of doing this as a team is that you build a common understanding and can make decisions together from an informed position. This is exactly what Rapid AI Traction is designed to do in four weeks.

Third, start building the cultural conditions. Identify your AI champions. Create space for experimentation. Talk about AI in your regular meetings. Model the behaviour you want to see. Celebrate early wins, even small ones, because they create momentum. This is the work of Building Block 2, and it can start in parallel with training. In fact, it works best when it does.

Fourth, resist the urge to start with policies. We know it's tempting, especially if legal or compliance is pushing. But policies written before people understand AI are almost always too restrictive and end up blocking the experimentation you actually need. Train first, build experience, and then write policies collaboratively with people who've actually used the tools. Those policies will be more balanced, more practical, and better received.

The organisations we see making the fastest progress are the ones where the CEO or MD has personally engaged with AI, brought their leadership team along, and created the cultural conditions for adoption. It sounds simple. And the framework is simple. But the execution requires commitment, consistency, and a willingness to learn alongside your team. The good news is that it works, and the results compound quickly once you start.

FURTHER READING FROM ACROSS THE SITE

We've written extensively about AI and leadership across this site. Here are the most relevant pieces, grouped by theme:

Leadership and Strategy

- Why AI adoption stalls without AI-literate senior leadership teams - The Toyota manufacturing parallel and why SLT literacy is the critical bottleneck

- AI demands visionary leaders - The Moderna case study and the AI Native/AI Emergent/Obsolete framework

- What if this was your CEO's new AI policy? - Shopify's AI mandate and what it means for leadership culture

- The future is human vision plus AI precision - Cambridge and Stanford research on AI-augmented leadership

- Anthropic built an AI too powerful to release. Here's what matters more. - Claude Mythos, the Microsoft study, and why AI literacy is now the leadership call

The Business Case

- The AI ROI problem (that isn't a problem at all) - Why ROI is the wrong first question

- Wharton study: AI is a true business asset - 800+ senior leaders surveyed

- NY Times is wrong about AI productivity - The real reason AI isn't boosting productivity yet

- Is AI a bubble? - Evidence-based counter to the bubble narrative

- AI is underhyped, not overhyped - Why the gap between potential and reality is an opportunity

Culture and Adoption

- Every employee is now an AI innovator - Bottom-up adoption and why it matters

- The grassroots AI uprising - Shadow AI and what it tells you about demand

- The CEO who let 80% of staff go - A cautionary case study

- The AI dismissal reflex - Why people give up on AI too early

Historical Perspective

- AI lessons from Copernicus and Gutenberg - Historical parallels for technology adoption

- Learning from Apple's problem - What Apple's AI challenge means for every business

- The Stanford AI Index: key insights - Industry outpacing academia

ABOUT THE AUTHOR

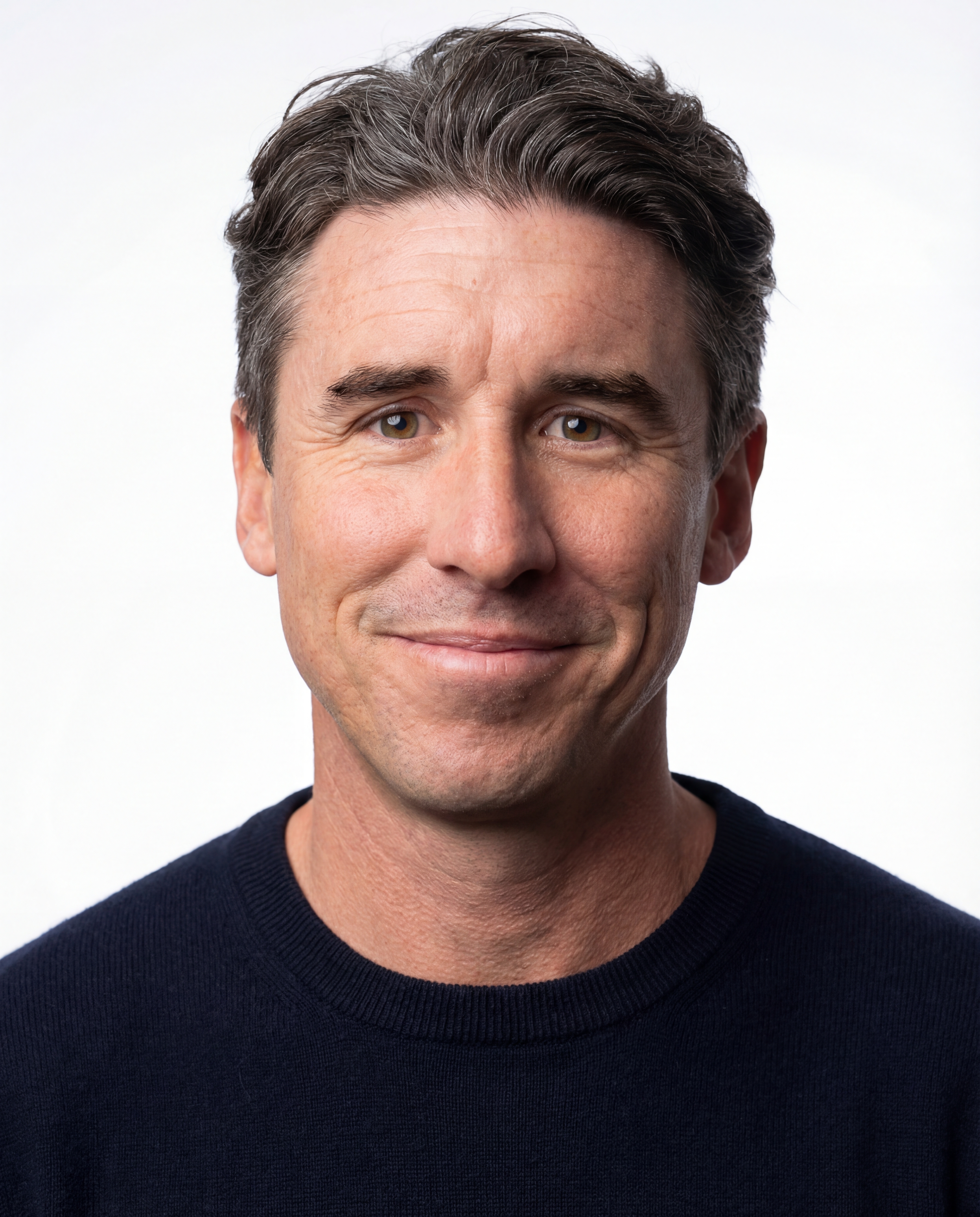

Mark Laurence

Mark is the founder of Ten Past Tomorrow, an AI consultancy and education business based in New Zealand. A trained futurist (Institute for the Future) and practical AI specialist, he works with senior leadership teams to move organisations from AI curiosity to AI capability.

He has worked with 100+ NZ organisations and leads Rapid AI Traction, a four-week programme for senior leadership teams, and The Path to AI Emergence, a ten-month transformation programme.

EXPLORE MORE GUIDES

How to Implement AI in Your Organisation

The practical pathway from curiosity to capability. The Five Strategic Building Blocks that actually work.

AI and the Future of Work

What AI actually means for jobs, skills, and the way we work. A balanced, evidence-based look.

AI Governance and Ethics for Business

How to build AI policies that enable innovation rather than block it.